The AI industry has spent two years debating whether structured expertise actually makes agents better — or whether bigger models and better prompts are enough.

That debate just ended.

A team of researchers from Stanford, CMU, Berkeley, Oxford, and BenchFlow published SkillsBench, the first peer-reviewed academic benchmark measuring whether Agent Skills actually improve AI performance. They evaluated 84 tasks across 11 domains and 7 model-agent configurations, for 7,308 total trajectories.

The results aren't subtle.

Curated Skills Raise Performance by 16 Percentage Points

Across every model and agent configuration tested, curated Skills improved pass rates by an average of +16.2 percentage points. That's not marginal optimization. That's the difference between an agent that fails most tasks and one that's production-viable.

The improvement held across the board — from Claude to GPT to Gemini, from code editors to general-purpose agents. The researchers controlled for model quality, task difficulty, and Skill selection. The signal was consistent: structured procedural knowledge makes agents measurably better at real work.

For anyone who's ever been told "just use a better prompt" or "the model will figure it out" — this is the empirical answer. It won't.

Healthcare Showed the Largest Gains — by Far

Of all 11 domains tested, Healthcare showed the largest improvement: +51.9 percentage points, with pass rates jumping from 34.2% to 86.1% when agents had access to curated Skills.
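
A quick note on the arithmetic, since "percentage points" and "percent" are easy to mix up. The sketch below uses only the two healthcare pass rates quoted above; the function names are illustrative and do not come from the paper.

```python
def percentage_point_gain(before_pct: float, after_pct: float) -> float:
    """Absolute change between two pass rates, in percentage points."""
    return round(after_pct - before_pct, 1)


def relative_gain(before_pct: float, after_pct: float) -> float:
    """Change expressed as a percent of the baseline pass rate."""
    return round((after_pct - before_pct) / before_pct * 100, 1)


# Healthcare pass rates as reported in this article.
baseline, with_skills = 34.2, 86.1

pp = percentage_point_gain(baseline, with_skills)
rel = relative_gain(baseline, with_skills)
print(f"+{pp} percentage points, +{rel}% relative to baseline")
# prints "+51.9 percentage points, +151.8% relative to baseline"
```

The benchmark figures in this article are all percentage-point differences, which is the more conservative of the two ways to state the gain.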

Manufacturing was second at +41.9pp. Software engineering, where models already have strong pretraining coverage, showed the smallest gain at +4.5pp.

The pattern is clear: the more organizational expertise matters in a domain, the bigger the impact of Skills. Healthcare isn't just any domain — it's the proving ground for whether structured AI intelligence works where it matters most. Independent research now confirms what we've seen in production with real practices: AI agents with curated healthcare Skills don't just perform better. They perform dramatically better.

For context, a 52-point improvement in healthcare means the difference between an agent that gets eligibility verification wrong more often than right — and one that handles it reliably enough for a real medical practice to trust.

AI Can't Write Its Own Playbook

Here's the finding that should concern anyone betting on prompt engineering alone.

When the researchers asked models to generate their own Skills before solving tasks, performance actually dropped by 1.3 percentage points on average. Not flat: negative. The models got worse.

Only one model (Claude Opus 4.6) showed marginal improvement (+1.4pp) with self-generated Skills. GPT-5.2 Codex degraded substantially (-5.6pp). The paper's conclusion is blunt: "models cannot reliably author the procedural knowledge they benefit from consuming."

This is the strongest possible argument for human-curated, organizationally-authored Skills. Your procedures, your decision logic, your edge cases — they need to come from the people who actually do the work. AI can consume and follow structured expertise brilliantly. It cannot create that expertise from scratch.

The "just dump your docs into the context window" approach isn't just inefficient. Research now shows it's counterproductive.

Less Is More in Skill Design

The benchmark revealed something we'd observed in practice but couldn't prove until now: focused Skills dramatically outperform comprehensive documentation.

Tasks with 2–3 focused Skills showed +18.6pp improvement. Tasks with 4+ Skills? Only +5.9pp. And comprehensive, monolithic Skills (the "put everything in one document" approach) actually reduced performance by 2.9pp.

The researchers attribute this to cognitive overhead and conflicting guidance. When agents receive too much instruction or instruction that's too broad, they struggle to extract what's relevant. In the benchmark, detailed but compact Skills that address specific task classes consistently outperformed documentation dumps.

This validates a core design principle behind myAgentSkills: skills should be modular, focused, and composable. Not bloated knowledge bases masquerading as expertise.

Smaller Models + Skills Beat Larger Models Without Them

The cost implications of this benchmark are significant.

Claude Haiku 4.5 with Skills scored 27.7% — outperforming Claude Opus 4.5 without Skills at 22.0%. A smaller, cheaper model with the right structured expertise outperformed a larger, more expensive model running blind.

The paper's Pareto frontier analysis confirms this isn't an anomaly: Skills shift the entire cost-performance curve upward. Organizations don't need to buy bigger models to get better results. They need to give the models they already use the procedural knowledge to do the work correctly.
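
To make the Pareto idea concrete, here is a minimal sketch. The two pass rates are the ones quoted above; the cost figures are hypothetical placeholders (the article gives no prices), and the `Config` type and `pareto_frontier` function are illustrative, not the paper's method.

```python
from typing import NamedTuple


class Config(NamedTuple):
    name: str
    cost: float       # HYPOTHETICAL relative cost units, not from the paper
    pass_rate: float  # percent, as quoted in this article


def pareto_frontier(configs: list[Config]) -> list[Config]:
    """Keep configs that no other config beats on both cost and pass rate."""
    frontier = []
    for c in configs:
        dominated = any(
            o.cost <= c.cost
            and o.pass_rate >= c.pass_rate
            and (o.cost < c.cost or o.pass_rate > c.pass_rate)
            for o in configs
        )
        if not dominated:
            frontier.append(c)
    return sorted(frontier, key=lambda c: c.cost)


configs = [
    Config("Claude Haiku 4.5 + Skills", cost=1.0, pass_rate=27.7),
    Config("Claude Opus 4.5, no Skills", cost=5.0, pass_rate=22.0),
]

frontier = pareto_frontier(configs)
print([c.name for c in frontier])
```

Under these assumed costs, the larger model without Skills is dominated: it costs more and passes fewer tasks, so only the smaller model with Skills stays on the frontier. Swap in your own pricing to run the same comparison.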

For any team evaluating AI spending, this reframes the ROI conversation entirely. The bottleneck isn't model intelligence. It's context.

The Quality Bar Matters Enormously

One finding that doesn't get enough attention: the benchmark analyzed 47,150 publicly available Skills and found the ecosystem-wide average quality score was 6.2 out of 12. The researchers used only top-quartile Skills (scoring 9 or above) in their benchmark, so the gains reported above were measured with well-curated Skills, not average ones.

This suggests the average publicly available Skill may not be good enough to move the needle. Quality variance determines whether Skills help or hurt.
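
As a rough illustration of what a quality bar looks like in practice, the sketch below filters a set of Skills by score. The threshold of 9 and the 12-point scale come from the figures above; the Skill names and their scores are hypothetical, and the paper's actual scoring rubric is not reproduced here.

```python
MAX_SCORE = 12          # quality scores are reported out of 12
QUALITY_THRESHOLD = 9   # the benchmark kept Skills scoring 9 or above


def passes_quality_bar(score: float, threshold: float = QUALITY_THRESHOLD) -> bool:
    """True when a Skill's quality score meets the curation threshold."""
    if not 0 <= score <= MAX_SCORE:
        raise ValueError(f"score must be between 0 and {MAX_SCORE}, got {score}")
    return score >= threshold


# HYPOTHETICAL Skills and scores, for illustration only.
candidate_scores = {
    "eligibility-verification": 10,
    "invoice-exceptions": 9,
    "generic-writing-tips": 6,
    "everything-in-one-doc": 4,
}

selected = sorted(
    name for name, score in candidate_scores.items() if passes_quality_bar(score)
)
print(selected)
# prints ['eligibility-verification', 'invoice-exceptions']
```

Note that a Skill at the reported ecosystem average of 6.2 would not clear this bar.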

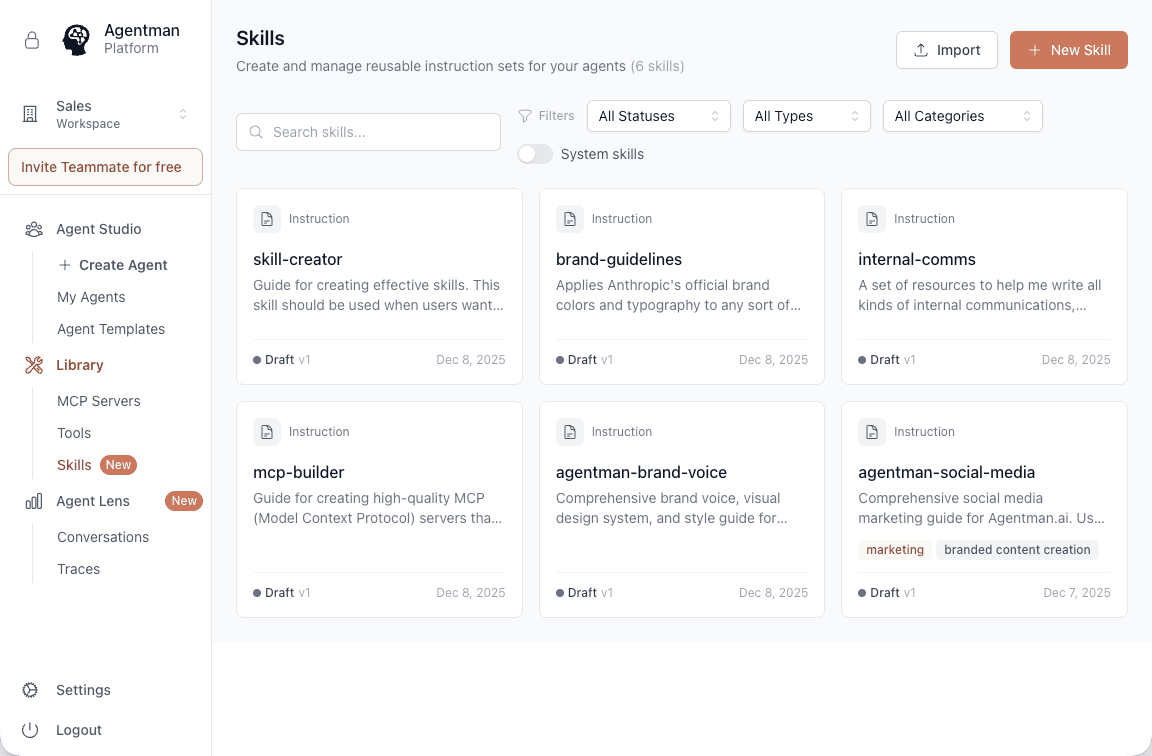

This is exactly why we built myAgentSkills as a curated, production-ready registry rather than an open marketplace where anyone uploads anything. The 65+ skills in our library are authored by practitioners, reviewed for accuracy, and structured to the Anthropic Agent Skills specification. Not every skill on the internet clears that bar. Independent research now proves the bar matters.

What This Means for the Industry

SkillsBench turns several things that were previously claims into empirically validated findings:

Skills are a distinct intelligence layer. The paper explicitly defines them as "structured packages of procedural knowledge" — separate from prompts, RAG, and tool documentation. Table 1 in the paper draws clean lines between these approaches. Skills are not fancy prompts. They're a different category of AI input.

Portability works. The benchmark tested Skills across 7 different model-agent configurations. The improvements were consistent regardless of the underlying model or agent harness. Portable, standardized Skills built on open specifications deliver measurable value everywhere they're deployed.

The proprietary lock-in approach is solving the right problem the wrong way. Jasper's Brand IQ, Writer's Knowledge Graph — they correctly identified that AI needs organizational context. But locking that context inside a single vendor means it only works in one place. The research validates what MCP and open standards make possible: expertise that travels.

From "A" Agent to "Your" Agent — in Seconds

Here's something the benchmark doesn't measure but production experience makes obvious: the speed at which Skills transform a generic agent into your agent.

Every AI platform gives you "a" agent — capable, general-purpose, trained on the internet. But "a" agent doesn't know your intake procedures. It doesn't know your brand voice. It doesn't know the exception your biggest client requires on every invoice.

Skills are what close that gap. And Agentman is the agent-building platform designed from the ground up to support them.

Build your Skills anywhere — in Claude, ChatGPT, or directly in myAgentSkills. Then attach them to your Agentman agent. In seconds, it goes from "a" agent to your agent. An agent that follows your procedures, speaks in your voice, and handles your edge cases — because the expertise was authored by the people who actually do the work.

The SkillsBench data shows what happens when you give any agent structured expertise: +16.2 percentage points average improvement, +51.9pp in healthcare. Now imagine that improvement applied to an agent purpose-built to consume Skills — one where the entire architecture is designed around structured expertise as a first-class input, not an afterthought.

That's what Agentman delivers. And that's what the research validates.

What We're Building on This

We've been building agents that manage structured expertise — SOPs, decision logic, procedures — outside the agent for over a year. That work taught us something fundamental: the intelligence layer needs to be separate from the agent itself, portable, and maintained by the people who actually do the work. myAgentSkills is the productization of that insight — 65+ production-ready skills across healthcare, sales, marketing, customer success, legal, and engineering, all built on MCP, all portable across Claude, ChatGPT, Cursor, and beyond.

We didn't have peer-reviewed research to point to when we started building this way. We had production experience, customer results, and a thesis: that the gap between smart AI and useful AI is structured expertise, and that expertise should be portable.

SkillsBench validates that thesis with data we didn't generate and methodology we didn't design. Independent researchers, rigorous methodology, reproducible results.

We're going to keep doing what we've been doing — building high-quality, production-ready Skills and the agent platform designed to use them. The research confirms we're on the right track. Now we have the numbers to prove it.

Try it yourself. Build a Skill in Claude or myAgentSkills, attach it to an Agentman agent, and see the difference structured expertise makes. Start free at myagentskills.ai.

The SkillsBench paper is available at arxiv.org/abs/2602.12670. Full methodology, results, and reproducibility details are published openly.