A Prompt Is a Question. A Skill Is a Procedure.

You know that colleague who's incredible at their job but absolutely cannot write down how they do it? You ask them to document the process and they hand you a sticky note that says "just check the thing and then do the stuff."

That's what a prompt is to AI. A sticky note.

And somehow, entire enterprises are running their AI strategy on sticky notes.

The Tuesday Prompt Problem

Here's a story that plays out at every company using AI. Someone on the team — let's call her Sarah — writes a brilliant prompt on Tuesday. It's beautiful. Specific. Gets exactly the right output. She Slacks it to the team with a fire emoji.

By Wednesday, Marcus has "improved" it. He added a paragraph about tone. Removed the part about formatting. Swapped "concise" for "comprehensive" because his use case is different.

By Thursday, three versions exist in three different Slack threads. Nobody agrees on which one works. The new hire can't find any of them and writes her own from scratch.

By next quarter, Sarah has left the company. The prompt is gone. The institutional knowledge of what worked? Also gone. You're back to square one, except now you're also paying for an AI platform.

This isn't a people problem. People are doing their best. This is an architecture problem — and it's the reason the most capable AI models in history still produce work that's inconsistent, untraceable, and impossible to improve.

Three Reasons Prompts Fall Apart at Scale

At the individual level, prompts are wonderful. You're exploring. Iterating. Getting unstuck. No complaints.

But organizations don't run on individual brilliance. They run on repeatable processes. And prompts break down for three reasons that get worse over time, not better.

Everyone prompts differently. Give ten team members the same task with the same AI model and you'll get ten different outputs. Not because the model is unreliable — because the instructions are. Sarah's "be concise" is Marcus's "be comprehensive." There's no shared definition of what good looks like, what steps to follow, or what to check before hitting send.

Nobody can trace what happened. When a prompt produces a questionable output — and it will — there's no record of what instructions led to that result. No version history. No way to go back and ask "wait, who told it to do that?" In regulated industries, this isn't just awkward. It's the kind of thing that ends up in an audit finding.

Nothing gets better. That incredible prompt Sarah wrote? It's decomposing in a Slack thread alongside 47 reaction emojis and a gif of a dancing cat. Nobody reviewed it against edge cases. Nobody tested it systematically. Nobody made it better. Prompts don't compound. They evaporate — like a really good idea you had in the shower but didn't write down.

A Skill Is Not a Fancier Prompt

Let's clear this up, because "skill" sounds like it could be marketing language for "prompt with extra steps." It's not.

A prompt encodes a request. "Hey AI, analyze this document."

A skill encodes a procedure. "Here are the twelve metrics to extract, the thresholds that trigger a flag, how to handle missing data, the three risk categories to evaluate, and the exact format for the output — which needs to match our internal review template because Janet in compliance will send it back if it doesn't."

That's not a subtle difference. It's the difference between calling a friend who's "pretty good with numbers" and hiring an accountant who knows your tax situation.

A skill packages four layers that a prompt simply cannot carry:

Domain knowledge — the context your AI needs but the internet didn't teach it. A skill for insurance denial appeals doesn't just read the denial letter. It knows which codes are worth fighting, which payer quirks apply, and what documentation actually strengthens your case. It knows this because your team knows this, and someone took the time to write it down properly.

Procedures — not "review and summarize" but the actual sequence. Extract these fields. Cross-reference against these criteria. If this condition is met, escalate. If that field is missing, flag it and continue. The kind of step-by-step logic that lives in your best employee's head and nowhere else.

Validation rules — the quality checks that catch mistakes before a human has to. Are all required fields present? Do the numbers add up internally? Does the recommendation actually align with company policy, or did the AI just confidently make something up? (It does that.)

Output formats — because nothing kills productivity like getting a perfect analysis in the wrong shape. The skill produces output that slides right into your existing workflow. Your memo template. Your spreadsheet schema. Your Slack summary format. No copy-paste reformatting.
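To make the four layers less abstract, here is a minimal sketch of how they might sit together in one structure. Nothing here is a real platform's schema: the `Skill` class, the step and check functions, the field names, and the 10,000 escalation threshold are all hypothetical, chosen only to mirror the layers described above.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    """Hypothetical container for the four layers; not any vendor's format."""
    name: str
    domain_knowledge: list  # context notes your team wrote down
    procedure: list         # ordered steps, each taking and returning a dict
    validators: list        # checks, each returning a list of problem messages
    output_fields: list     # keys the finished output must contain, in order

    def run(self, record):
        state = dict(record)
        for step in self.procedure:
            state = step(state)
        problems = [msg for check in self.validators for msg in check(state)]
        output = {key: state.get(key) for key in self.output_fields}
        return output, problems


def flag_missing_payer(state):
    # "If that field is missing, flag it and continue."
    flags = list(state.get("flags", []))
    if not state.get("payer"):
        flags.append("payer missing")
    return {**state, "flags": flags}


def escalate_large_claims(state):
    # "If this condition is met, escalate." The threshold is an assumption.
    return {**state, "escalate": state.get("amount", 0) > 10_000}


def require_fields(state):
    # Validation rule: are all required fields present?
    required = ("claim_id", "amount")
    return [f"missing required field: {k}" for k in required if k not in state]


appeal_skill = Skill(
    name="denial-appeal-triage",
    domain_knowledge=["Placeholder: which denial codes are worth appealing."],
    procedure=[flag_missing_payer, escalate_large_claims],
    validators=[require_fields],
    output_fields=["claim_id", "escalate", "flags"],
)

output, problems = appeal_skill.run({"claim_id": "C-1", "amount": 12_500})
```

Run against that sample record, the procedure flags the missing payer, marks the claim for escalation, and the validator reports no problems. Run it against an empty record and the validator returns two messages instead of letting the gap slide through.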

Skills Behave Like Assets, Not Artifacts

Here's where it gets interesting. Skills don't just produce better outputs — they behave like something your organization actually owns.

They're version-controlled. Every edit is tracked. You can see what changed, when, and who changed it. You can roll back to last week's version when someone's "improvement" turns out to be the opposite. This is table stakes in software engineering, yet it has been largely absent from how organizations manage their AI instructions.

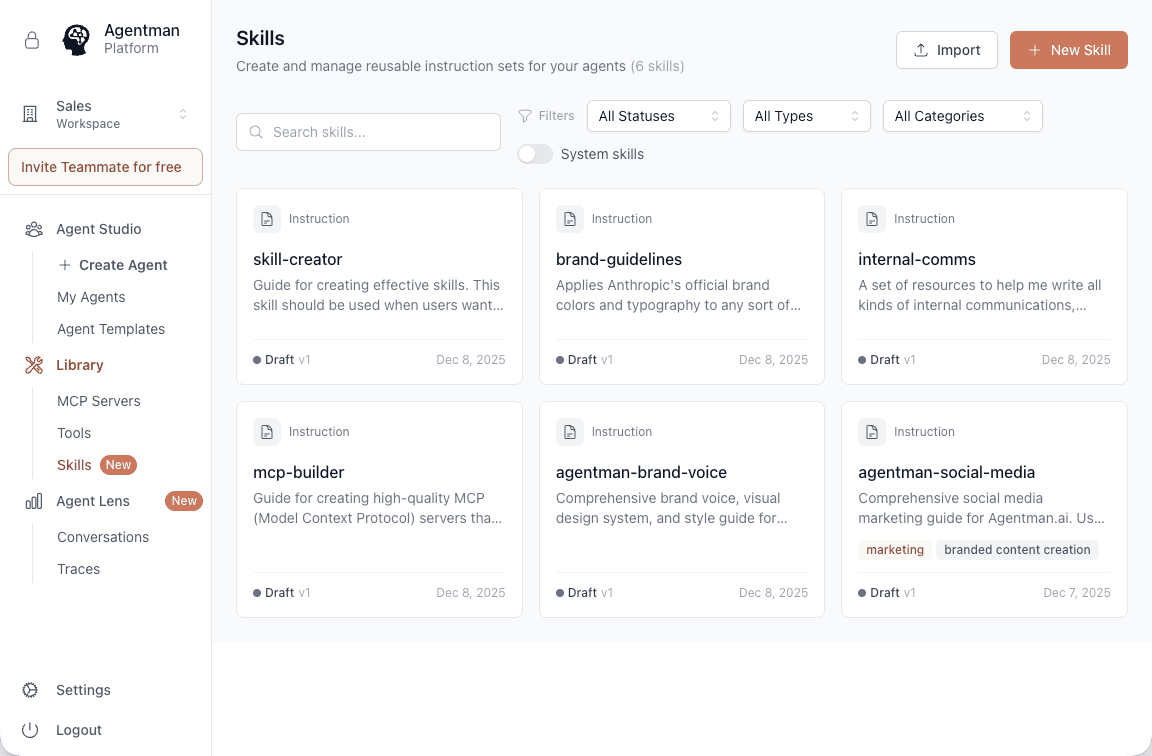

They're composable. Skills can reference other skills. Your quarterly review skill calls a financial analysis skill, a competitive landscape skill, and a formatting skill — each maintained independently, each improving on its own schedule. You build a library instead of a landfill.
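Composition is easier to see in code. The sketch below assumes a tiny in-memory registry where one skill names the skills it depends on. The `skill` decorator, the `run` helper, and the skill names are invented for illustration, and the formatting skill is folded into the review function to keep it short.

```python
SKILLS = {}  # hypothetical registry: name -> (function, names of skills it uses)


def skill(name, uses=()):
    """Register a function as a named skill with optional dependencies."""
    def register(fn):
        SKILLS[name] = (fn, tuple(uses))
        return fn
    return register


def run(name, data):
    """Run a skill after running every skill it references."""
    fn, uses = SKILLS[name]
    deps = {dep: run(dep, data) for dep in uses}
    return fn(data, deps)


@skill("financial-analysis")
def financial_analysis(data, deps):
    growth = data["revenue"] / data["prior_revenue"] - 1
    return {"growth": round(growth, 3)}


@skill("competitive-landscape")
def competitive_landscape(data, deps):
    return {"competitors": sorted(data["competitors"])}


@skill("quarterly-review", uses=["financial-analysis", "competitive-landscape"])
def quarterly_review(data, deps):
    growth = deps["financial-analysis"]["growth"]
    names = ", ".join(deps["competitive-landscape"]["competitors"])
    return f"Revenue growth: {growth:.1%}. Competitors tracked: {names}."


report = run(
    "quarterly-review",
    {"revenue": 120, "prior_revenue": 100, "competitors": ["Zeta", "Acme"]},
)
```

Here `report` comes back as "Revenue growth: 20.0%. Competitors tracked: Acme, Zeta." The point of the design is that the review skill never reimplements the analysis: improve `financial-analysis` once and every skill that references it picks up the change.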

They actually get better. Because skills are durable and shared, they accumulate feedback. When an edge case pops up — and edge cases always pop up — you update the skill once and every future execution benefits. The investment compounds. It's the opposite of Sarah's disappearing Slack prompt.

What This Looks Like in Practice

Let me make this concrete with an example.

The prompt approach: An analyst pastes a Confidential Information Memorandum into ChatGPT and types "summarize this CIM and highlight key risks." The model produces something... fine. Maybe it catches the revenue concentration. Maybe it misses the working capital trend entirely. The output is a wall of text that the analyst spends 45 minutes reformatting for the IC memo. The next analyst prompts differently and gets completely different results. Nobody notices the inconsistency until the partner does.

The skill approach: A CIM Analyzer skill encodes your firm's actual analytical framework. It extracts revenue, EBITDA, and growth rates. Flags customer concentration above 20%. Identifies working capital anomalies. Checks management rollover terms. Structures everything to match your IC memo template — executive summary up top, risk factors in a standardized table, supporting details organized by section.
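One slice of that skill, the concentration check, could be sketched like this. It assumes "customer concentration above 20%" means any single customer contributing more than 20% of total revenue; that reading, the function names, and the sample figures are illustrative assumptions, not the firm's actual framework.

```python
CONCENTRATION_THRESHOLD = 0.20  # "Flags customer concentration above 20%."


def concentration_flags(revenue_by_customer, threshold=CONCENTRATION_THRESHOLD):
    """Return one flag per customer whose revenue share exceeds the threshold."""
    total = sum(revenue_by_customer.values())
    if total <= 0:
        # Handle missing data explicitly instead of guessing.
        return ["total revenue missing or zero; concentration not computed"]
    flags = []
    for customer, revenue in sorted(revenue_by_customer.items()):
        share = revenue / total
        if share > threshold:
            flags.append(f"{customer}: {share:.0%} of revenue")
    return flags


def risk_table(flags):
    """Render flags as rows of a simple, standardized risk table."""
    rows = ["Risk factor | Detail"]
    rows += [f"Customer concentration | {flag}" for flag in flags]
    return "\n".join(rows)


# Made-up sample figures for illustration only.
sample = {"Acme": 30, "Bolt": 20, "Cray": 50}
flags = concentration_flags(sample)
table = risk_table(flags)
```

With the sample figures, Acme (30%) and Cray (50%) are flagged while Bolt, at exactly 20%, is not, because the rule says "above." That kind of boundary decision is exactly what a skill pins down once and an ad-hoc prompt leaves to chance.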

Same model. Same document. One approach gives you a coin flip. The other gives you a process.

From Renting Intelligence to Owning It

Here's the real shift, and it's bigger than just "better outputs."

Prompts are rented intelligence. You're paying for a model and hoping the person typing the instructions gets it right every time. The knowledge lives in individual heads and scattered chat histories. When someone leaves, so does the institutional knowledge of how to get good results. You didn't lose an employee — you lost a human prompt library.

Skills are owned intelligence. Your procedures, validation rules, and domain expertise are captured in durable, portable, version-controlled assets. They belong to your organization. New team members inherit the accumulated expertise on day one instead of spending three months figuring out "how we do things around here."

This is the difference between using AI as an expensive novelty and using it as actual infrastructure.

Go Build One

You don't need to take my word for any of this. Take a process your team repeats every week. The one where everyone does it slightly differently and nobody's sure whose version is correct. Document the steps, the decision criteria, the output format, and the weird edge cases that only come up on Fridays.

Package it as a skill. Run it twice. Compare the results to what you were getting from ad-hoc prompting.

The consistency will be obvious. And Sarah's next great idea? It'll still be there on Monday.

Start building at myAgentSkills.ai